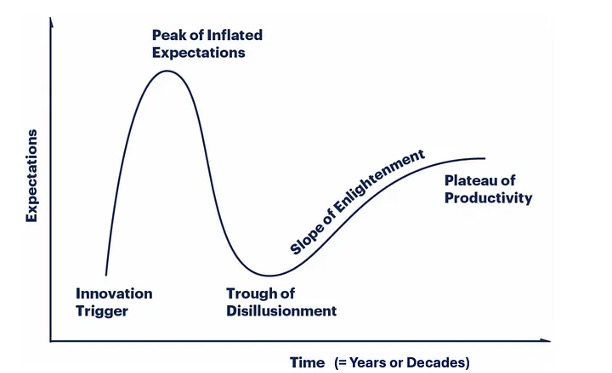

The Gartner Hype Cycle is dead. It was a useful framework for decades, a way to visualize how technologies move from initial innovation through expectation inflation, disappointment, and eventually maturity. But it was always built on a particular cadence of innovation—one where meaningful breakthroughs happened on a scale of years to decades. Cloud computing spent roughly two decades moving through that cycle. Smartphones took about ten years. The framework worked because the pace of meaningful technological change was measured enough that you could actually plot the trajectory.

Then came late 2022 through 2024. GPT-3.5, GPT-4, Midjourney, Claude (multiple generations), Gemini, Sora, and a dozen other breakthrough models arrived within about two years, often only months apart. Not incremental improvements. Legitimate "this changes how we think about the problem" breakthroughs. Each one would have been its own point on the Hype Cycle in the old framework. Instead, they're arriving at a velocity that makes the entire concept of the cycle meaningless.

The market is now permanently stuck at the Peak of Inflated Expectations. You can't move through the Trough of Disillusionment because before you even settle into disappointment about one capability, three new model releases have arrived offering substantially better performance in new domains. The cycle is broken because the cycle assumes you eventually reach a stability point where the market understands what the technology can and can't do. In AI, that moment never comes because the technology's capabilities keep reshaping the conversation.

Look at what this means practically. The old framework would show smooth curves, an adoption path you could plan against. "This technology will be mature in five years" gave you a mental model and a roadmap. With AI, there is no roadmap. There are only brief dips in sentiment in an otherwise chaotic landscape. Someone releases a better model, the market rebounds, expectations reset upward, and we continue. The Hype Cycle's core assumption, that there's a predictable path from hype to reality, simply doesn't apply when "reality" keeps changing faster than organizations can adapt to it.

The framework also can't measure technological maturity when breakthroughs arrive every few months. In traditional tech cycles, you measure maturity through adoption curves, standardization, and price compression. But how do you measure maturity when the baseline capability set is continuously expanding? The AI landscape in 2024 doesn't look like it's maturing; it looks like it's spinning out of control. That's not necessarily pessimistic. It's just a different phenomenon that the Hype Cycle was never designed to capture.

Consider the sheer proliferation of specialized models. Hugging Face hosts over 500,000 AI models now. Half a million options ranging from general-purpose LLMs to models specialized for specific medical domains, specific scientific problems, specific business workflows. In the Gartner framework, you'd expect consolidation around a few successful implementations as a technology matures. Instead, we're seeing explosive diversification. The market isn't finding a stable equilibrium; it's fracturing into thousands of specialized applications, each with its own maturity curve, each with its own adoption timeline.

The real takeaway isn't that the Hype Cycle is useless going forward—though it is, for AI specifically. The takeaway is that we're operating in a paradigm shift. The technology is changing too fast for traditional analytical frameworks to keep pace. We need new models for thinking about technological adoption, capability assessment, and organizational readiness that don't assume stability. The Gartner Hype Cycle was built for a different era of innovation. AI didn't just move through the cycle faster than expected. It broke the cycle entirely.